System-level problems often show up in repairs as replaced components that, when tested, show No Trouble Found (NTF). Part of this difficult and widespread problem stems from system-level failures caused by unexpected interactions among elements of the system, rather than from component-level faults. We examine the NTF issue through automated analysis, or mining, of repair and maintenance data generated by automotive service technicians.

Not every issue can be foreseen or assessed at the design and development stages, owing both to cost and to the multiplicity of interactions in a system; problems must therefore be addressed after design, manufacture, and actual use. The faster problems and their root causes are identified, the sooner corrective actions can be taken. Such data analyses may lead to root-cause identification and solutions, which ultimately result in engineering design modifications.

To fix the immediate symptoms in a vehicle repair, the most obviously misbehaving components are often replaced, even though this may not fix the root cause(s). This leads to repeat failures (i.e., repairs that are not Fixed Right First Time), lower customer satisfaction, higher overall costs, and NTF results for replaced components if and when they are tested. These problems can be addressed using data that is already available but remains considerably under-utilized.

Example: For one vehicle model, fuses were blowing on instrument-panel wiring harnesses. Replacing the fuses, and sometimes the harness, did not reduce repeat visits for the same issue. Data analysis rapidly traced the root cause to windshield issues: rainwater was leaking into the fuse box. The analysis first extracted detailed information from the technician text narratives, which identified water leakage (among other aspects) in individual records, and then found co-occurrence patterns for water in the fuses across the ensemble of records.

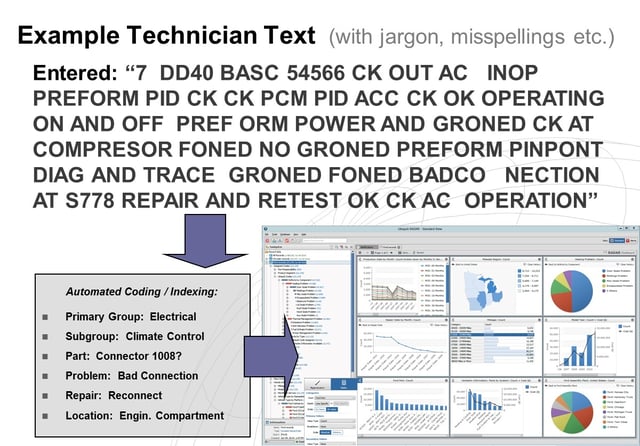

Figure 1: Extracting detailed information from verbatim technician text narratives to enable analytics
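As a rough illustration of the extraction step, the sketch below tags a free-text technician narrative with the issues it mentions. It is only a minimal keyword-matching example and is not the method used in our analysis: the tag names, the patterns in `ISSUE_PATTERNS`, and the function `extract_issues` are all hypothetical, and real narratives would need a far richer vocabulary plus handling of misspellings and shop abbreviations.

```python
import re

# Hypothetical issue tags and keyword patterns (assumptions for illustration).
ISSUE_PATTERNS = {
    "blown_fuse": re.compile(
        r"\b(?:blown|blew|burnt)\s+fuses?\b"
        r"|\bfuses?\s+(?:is\s+|was\s+|keeps?\s+)?(?:blown|blowing|blew)\b",
        re.IGNORECASE,
    ),
    "water_leak": re.compile(r"\bleak\w*|\bwater\s+(?:intrusion|entry)\b", re.IGNORECASE),
    "harness": re.compile(r"\bharness", re.IGNORECASE),
    "windshield": re.compile(r"\bwind\s?shield", re.IGNORECASE),
}


def extract_issues(narrative):
    """Return the set of issue tags whose pattern appears in the narrative."""
    return {tag for tag, pattern in ISSUE_PATTERNS.items() if pattern.search(narrative)}


if __name__ == "__main__":
    # Made-up narrative in the style of a technician comment.
    text = "IP FUSE BLOWN AGAIN. FOUND WATER LEAKING INTO FUSE BOX AT WINDSHIELD SEAL"
    print(sorted(extract_issues(text)))
    # prints ['blown_fuse', 'water_leak', 'windshield']
```

Turning each narrative into a set of tags like this gives every service record a structured form that the later pattern analysis can work on.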

Data analysis may be the most effective and efficient way to identify common system-level problems because it provides a cheaper, centralized means, with no need to transport physical components or to have analysts travel to repair locations. Data analytics for NTF issues involves some inherently complex computing, together with the extraction of detailed information from messy verbatim technician narratives. Both the compute-intensive techniques and the text extraction have become increasingly feasible with the powerful hardware and software now commonly available. It is now possible to set up Early Warning Systems to help reduce the costs and risks associated with NTF issues.

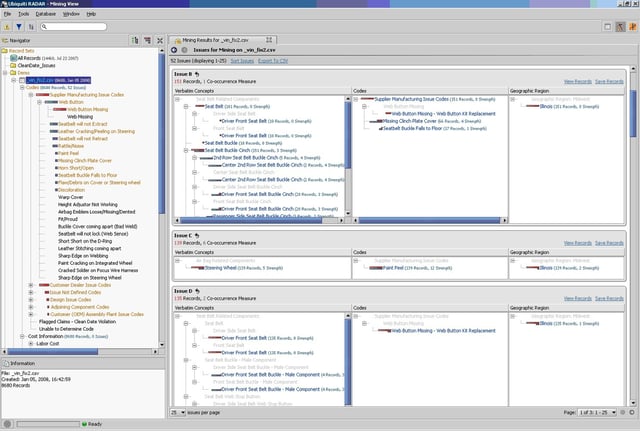

Simple analytical techniques that provide clues to system-level issues, as in our earlier example, may be applied to tabular, record-oriented service data for maintenance and repairs as follows. Frequent intra-record co-occurrences, found with a relatively simple technique, may reveal that fuses blow when leakage is present. Inter-recordset (dis)similarities may indicate that blown fuses are unevenly distributed among various leakage issues, in certain geographic areas, and/or during rainy seasons. Inter-record, intra-set sequences might show that fuses blow around the time that leakage repairs happen. These techniques use appropriate statistical metrics and can draw on previously learned automotive repair information, which is often available.

Figure 2: Example co-occurrences in service records where each potential “issue” is listed horizontally
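The first of the techniques described above, intra-record co-occurrence, can be sketched in a few lines. The example assumes each service record has already been reduced to a set of issue tags, and it uses lift as the statistical metric; the choice of lift, the function name `cooccurrence_lift`, the `min_support` threshold, and the sample records are all assumptions made for illustration, not a description of our production analysis.

```python
from collections import Counter
from itertools import combinations


def cooccurrence_lift(records, min_support=2):
    """Score pairs of issues that appear together in the same record.

    records: list of sets of issue tags, one set per service record.
    Returns {(issue_a, issue_b): lift} for pairs that co-occur in at least
    min_support records. lift = P(a and b) / (P(a) * P(b)); values well
    above 1 suggest the two issues appear together more often than chance.
    """
    n = len(records)
    single = Counter()
    pair = Counter()
    for issues in records:
        single.update(issues)
        # Sort so each pair is always counted under the same key order.
        pair.update(combinations(sorted(issues), 2))

    lifts = {}
    for (a, b), count in pair.items():
        if count >= min_support:
            lifts[(a, b)] = (count / n) / ((single[a] / n) * (single[b] / n))
    return lifts


if __name__ == "__main__":
    # Made-up records, loosely modeled on the fuse and leakage example.
    records = [
        {"blown_fuse", "water_leak"},
        {"blown_fuse", "water_leak", "windshield"},
        {"blown_fuse", "harness"},
        {"water_leak", "windshield"},
        {"harness"},
        {"brake_noise"},
        {"brake_noise"},
        {"blown_fuse", "water_leak"},
    ]
    for pair_key, lift in cooccurrence_lift(records).items():
        print(pair_key, round(lift, 2))
```

On this toy data, blown fuses and water leaks co-occur in three of eight records for a lift of 1.5, and water leaks and windshield issues co-occur in two for a lift of 2.0. With real service data the support threshold and the metric would need to be chosen with care, since rare pairs can produce large but unreliable lift values.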

The previous paragraphs describe aspects of our upcoming presentation titled “Analyzing Data for Systems vs. Components” at the 2016 AIAG Quality Summit, September 29-30, 2016 at the Suburban Collection Showcase in Novi, Michigan. (This material is partially based upon work supported by the National Science Foundation under Award Number: IIP-0712385. Any opinions, findings, and conclusions or recommendations expressed in this publication are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.)

About the Authors

Dr. Keith Thompson is involved with software and services at Ubiquiti and has two decades of experience in product design, process development, and quality control for several industries. At Ubiquiti, he leads the research, design, and development of its high-end information analysis technologies, and is the main technical liaison for client services. Formerly, he held senior technical positions at Ford Motor Company and led the development of improved methods in design and manufacturing. He has pending patents, technical achievement awards, and numerous published papers.

Dr. Nandit Soparkar helps in business and technical development at Ubiquiti. A former tenure-track professor in the College of Engineering at the University of Michigan, Ann Arbor, he has advised start-up software companies, several of which are now part of publicly traded companies. He received the National Science Foundation Career (i.e., Presidential Young Investigator) Award and worked at AT&T Bell Labs at Murray Hill and the IBM Watson Research Center in New York. He has published and pending books, patents, and research papers, and has peer-reviewed research efforts.